This is the first in a two-part series on spectrum basics and on how we could better manage the spectrum to encourage innovation and prevent either large corporations or the government from interfering with our right to communicate. Part 2 is available here.

We often think of our wireless communications as traveling on separate paths: television, radio, Wi-Fi, cell phone calls, and so on. In fact, these signals are all part of the same continuous electromagnetic spectrum. Different parts of the spectrum have different properties, to be sure: you can see visible light, but not radio waves. But these differences are a matter of degree rather than of fundamental makeup.

As radio, TV, and other technologies were developed and popularized throughout the 20th century, interference became a major concern. Two signals using the same band of the spectrum within the same broadcast range would keep both from being received clearly. You have likely experienced this on your car radio when driving between stations on nearby frequencies: news and music vie with each other, both alternating with static.

To mitigate the problem, the federal government did what any Econ 101 textbook says you should when you have a “tragedy of the commons” situation, in which more people using a resource degrades it for everyone: it assigned property rights. This is why radio stations tend not to interfere with each other now.

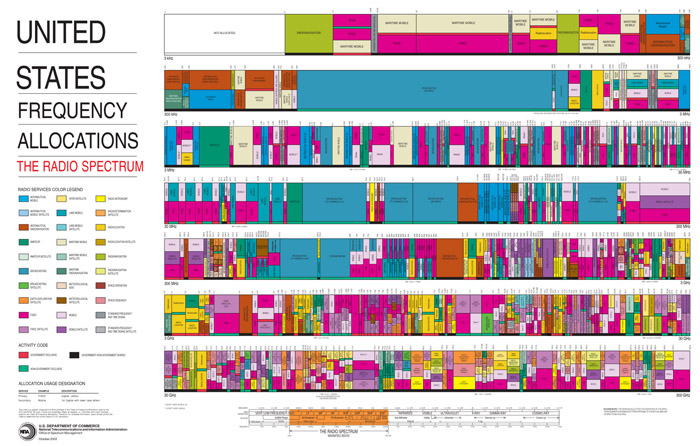

The Federal Communications Commission divided the spectrum into slices known as bands and granted exclusive licenses to radio, TV, and eventually telecom companies, ensuring that each licensee was the only one with the legal right to broadcast on a given frequency range within a certain geographic area. Large bands were reserved for military use as well.

Originally, these licenses came free of charge, on the condition that broadcasters meet certain public interest requirements. Beginning in 1993, the government shifted to an auction process, allowing companies to bid on spectrum licenses. That practice continues today whenever space on the spectrum is freed up. (For a more complete explanation of the evolution of licensing, see this excellent Benton Foundation blog post.)

Although there have been several redistributions over the decades, the basic architecture remains. Communications companies own exclusive licenses for large swaths of the usable spectrum, with most other useful sections reserved for the federal government’s defense and communications purposes (e.g. aviation and maritime navigation). Only a few tiny bands are left open as free, unlicensed territory that anyone can use.

This small unlicensed area is where many of the most innovative technologies of the last several decades have sprung up, including Wi-Fi, Bluetooth, Radio Frequency Identification (RFID), and even garage door openers and cordless phones. A recent report by the Consumer Electronics Association concluded that unlicensed spectrum generates $62 billion in economic activity, and that figure counts only a portion of the direct retail sales of devices using unlicensed spectrum.

On its face, the current spectrum allocation regime appears to be an obvious solution: an efficient allocation of scarce resources that lets us consume all kinds of media with minimal interference or confusion, and that raises auction revenue for the government to boot.

Except that the spectrum is not actually a limited resource. Thanks to the constant evolution of broadcasting and receiving technologies, the idea of a finite spectrum has become obsolete, and with it the rationale for the FCC’s exclusive licensing framework. This topic was explored over a decade ago in a Salon article by David Weinberger, in which he interviews David P. Reed, a former MIT computer science professor and early Internet theorist.

Reed describes the fallacy of thinking of interference as something inherent in the signals themselves. Signals traveling on similar frequencies do not physically bump into each other in the air and scramble the message. They simply pass through each other, which means multiple signals can be overlaid on one another. (You don’t have to understand why this happens; just know that it does.) Technologist Bob Frankston derides the current exclusive licensing regime as granting monopolies on colors.

As Weinberger puts it:

The problem isn’t with the radio waves. It’s with the receivers: “Interference cannot be defined as a meaningful concept until a receiver tries to separate the signal. It’s the processing that gets confused, and the confusion is highly specific to the particular detector,” Reed says. Interference isn’t a fact of nature. It’s an artifact of particular technologies.
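For readers who do want a concrete picture of signals overlaying without destroying each other, here is a minimal Python sketch under deliberately idealized assumptions. Two hypothetical, noiseless "stations" are modeled as pure sine waves at 5 and 12 cycles per sampling window, the "air" is modeled as simple sample-by-sample addition, and the "receiver" is a naive discrete Fourier transform. The function names and numbers are illustrative only and are not a model of any real radio system.

```python
import cmath
import math

N = 64  # samples per window (hypothetical choice for illustration)


def tone(freq, amplitude, n=N):
    """Sample a sine wave that completes `freq` whole cycles over the window."""
    return [amplitude * math.sin(2 * math.pi * freq * k / n) for k in range(n)]


def dft_magnitudes(signal):
    """Naive discrete Fourier transform: |X[f]| for f = 0 .. n/2."""
    n = len(signal)
    mags = []
    for f in range(n // 2 + 1):
        acc = sum(x * cmath.exp(-2j * math.pi * f * k / n)
                  for k, x in enumerate(signal))
        mags.append(abs(acc))
    return mags


station_a = tone(5, 1.0)   # hypothetical station A
station_b = tone(12, 0.5)  # hypothetical station B

# The "air" just adds the two waves together (superposition).
combined = [a + b for a, b in zip(station_a, station_b)]

# A receiver that analyzes frequency content finds both stations intact.
mags = dft_magnitudes(combined)
peaks = sorted(range(len(mags)), key=lambda f: mags[f], reverse=True)[:2]
print(sorted(peaks))  # → [5, 12]
```

Both tones survive the addition with their amplitudes intact: the magnitudes at bins 5 and 12 come out to N/2 times each amplitude (32 and 16). Whether the two can be pulled apart depends on how the receiver processes the combined signal, which is Reed's point about interference being an artifact of the detector.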

In the past, our relatively primitive hardware-based technologies, such as car radios, could distinguish only signals separated by stretches of vacant spectrum. But advances in transmitters and increasingly sensitive receivers, along with software that can quickly and seamlessly sense which frequencies are available and make use of them, let us effectively expand the usable range of the spectrum. This approach makes it possible to squeeze more and more communication capacity into any given band as technology advances, without sacrificing the clarity of existing signals. In the words of Kevin Werbach and Aalok Mehta in a recent International Journal of Communication paper, “The effective capacity of the spectrum is a constantly moving target.”
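To make the idea of software that "senses what frequencies are available" more tangible, here is a toy Python sketch of one simple approach, energy detection. A device measures power on each channel, treats anything well above the noise floor as occupied, and moves to a quiet channel, staying silent when none is free. The noise floor, margin, channel numbers, and power readings are all hypothetical, and this is not the algorithm of any particular standard or product.

```python
NOISE_FLOOR_DBM = -100.0  # assumed receiver noise floor (hypothetical)
MARGIN_DB = 10.0          # assumed margin above noise that counts as "occupied"


def vacant_channels(readings):
    """Return channels whose measured power is close enough to the noise floor."""
    limit = NOISE_FLOOR_DBM + MARGIN_DB
    return [ch for ch, power in sorted(readings.items()) if power < limit]


def choose_channel(readings, current=None):
    """Keep the current channel while it stays quiet; otherwise pick the quietest free one."""
    free = vacant_channels(readings)
    if not free:
        return None  # nothing free: stay silent rather than interfere
    if current in free:
        return current
    return min(free, key=lambda ch: readings[ch])


# Hypothetical sensing sweeps: channel number -> measured power in dBm.
sweeps = [
    {1: -45.0, 2: -97.5, 3: -60.2, 4: -99.1, 5: -92.0},
    {1: -45.0, 2: -97.0, 3: -60.0, 4: -50.3, 5: -93.5},  # someone starts using channel 4
    {1: -44.8, 2: -70.0, 3: -59.9, 4: -51.0, 5: -62.0},  # no quiet channels left
]

current = None
history = []
for readings in sweeps:
    current = choose_channel(readings, current)
    history.append(current)
print(history)  # → [4, 2, None]
```

The device starts on the quietest free channel (4), steps aside to channel 2 when another signal appears on 4, and goes silent when every channel is busy. Real systems are far more sophisticated, but the basic loop of sensing, deciding, and re-sensing is what lets capacity track technology rather than fixed license boundaries.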

In the next post, we’ll look at how we can take advantage of current and future breakthroughs in wireless technology, and how our outdated approach to spectrum management is limiting important innovation.